Now the the size the compressed file is reduced by 8 times, than if we would’ve stored the bit-wise information in characters of zeros and ones, in a way readable by normal human eyes( you can still train your brain to read an encoded binary file if you want!). This helps keep the memory requirements independent of the size of file being compressed. The principle idea behind it is that it takes the information on the bit to be written one by one from a stream of strings (which are the character-wise huff-codes of the original file) and encodes them into an unsigned char 8 bits at a time.Īs an unsigned char has 8 bits, we store the encoding of our compressed file into these bit positions( chunks of 8 at a time) and then write this chunk byte by byte into the output file and clear the buffer. But as we know, our output compressed file will consist only of zeros and ones, it is therefore not wise to waste 8 bits of memory for representing only 0 or 1.Ī workaround for this is implemented in the BitwiseWrite class. This however, also means that a chunk of one byte(size of char) is the smallest we can go while interacting with our file. This is what enabled us to so conveniently read and write one char at a time in our text files. One important point to take note of is that C++ language has char as the fundamental data type. But our main file (and so the compressed one) has no limit on its size, so would like to optimize the memory used in this case as much as possible. As the dictionary would contain no more than 256 mapping, its maximum size is a constant( a few kBs ). This however is not to be the case with our (about to be) generated compressed file. As the output is a txt file, it is readable by Human brain. Next call to writeToDictionary() does precisely that. So we save this code mapping in a “dictionary.txt” file to be later used for decoding the compressed file as and when required. This dictionary of codes is what will be used for encoding, and then decoding the files. It builds a Huffman Tree from the freq arrays and generates codes by traversing the built Huffman Tree. Meanwhile we also calculate the totcharactersin it(use of which is discussed later) Once the frequency arrays is generated, we give a function call to the soul of our of our program, void generateHuffmanCodes(char data, int freq, int size) We use the following global variables: int freq, size=0 //actual number of elements stored in the freq array char arr We also add a pseudo EndOfFilecharacter (which I chose to be the character with ASCII value 129) with frequency exactly 1 ,the huff-code of which is to be appended at the end of our compressed file. Then we call the function fillFreqArray(fin) which as the name suggests reads from the input file and maps each distinct character present in it to its frequency. The file stream object fin is opened in input mode for the said file.

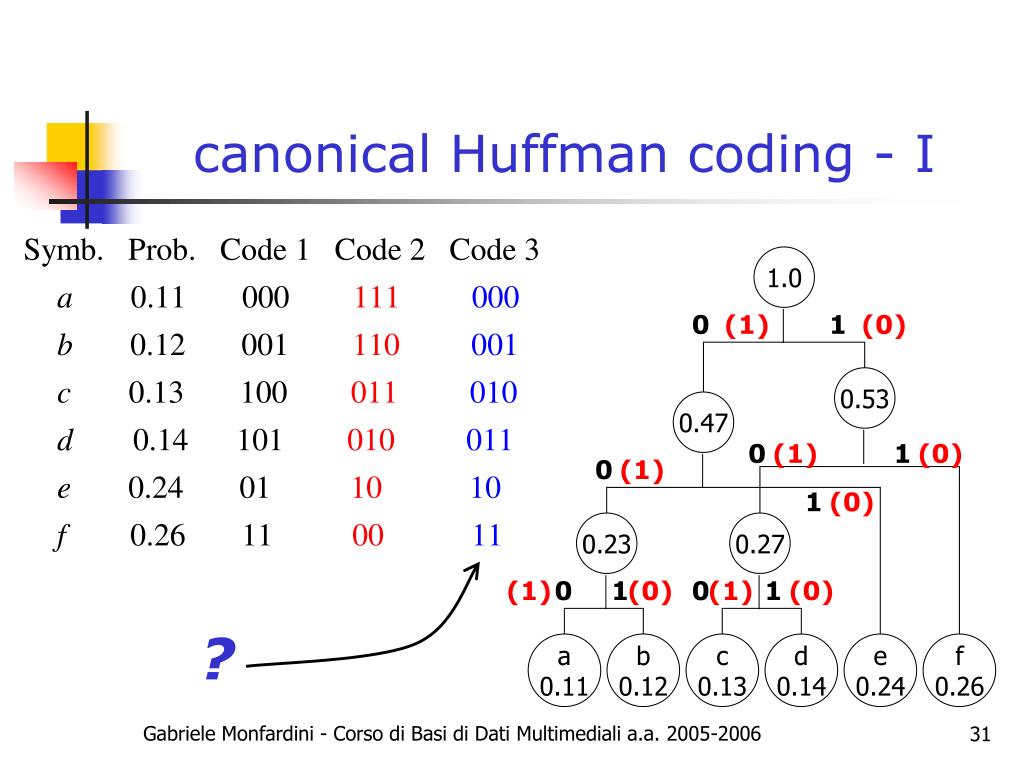

Lets from the int main() as any program execution starts at main(), and ends at main().įirst, we input the name of the file(along with extension) to be compressed. Now, assuming you’ve gotten familiar with the working principal of the algorithm, lets proceed on with the entirety of my code: Traverse the Huffman Tree and assign codes to characters.Ī detailed explanation of the working and general implementation can be found below as linked:.

Build a Huffman Tree from input characters.

There are mainly two major parts in Huffman Coding. See this for applications of Huffman Coding.

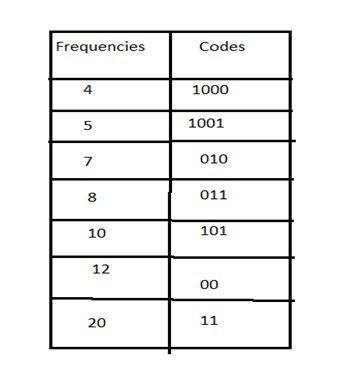

If the compressed bit stream is 0001, the de-compressed output may be “cccd” or “ccb” or “acd” or “ab”. This coding leads to ambiguity because code assigned to c is the prefix of codes assigned to a and b. Let there be four characters a, b, c and d, and their corresponding variable length codes be 00, 01, 0 and 1. Let us understand prefix codes with a counter example. This is how Huffman Coding makes sure that there is no ambiguity when decoding the generated bitstream. The variable-length codes assigned to input characters are Prefix Codes, means the codes (bit sequences) are assigned in such a way that the code assigned to one character is not the prefix of code assigned to any other character. The most frequent character gets the smallest code and the least frequent character gets the largest code. The idea is to assign variable-length codes to input characters, lengths of the assigned codes are based on the frequencies of corresponding characters. Huffman coding is a lossless data compression algorithm. Implementation of Huffman Coding for lossless text file compression

0 Comments

Leave a Reply. |

Details

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed